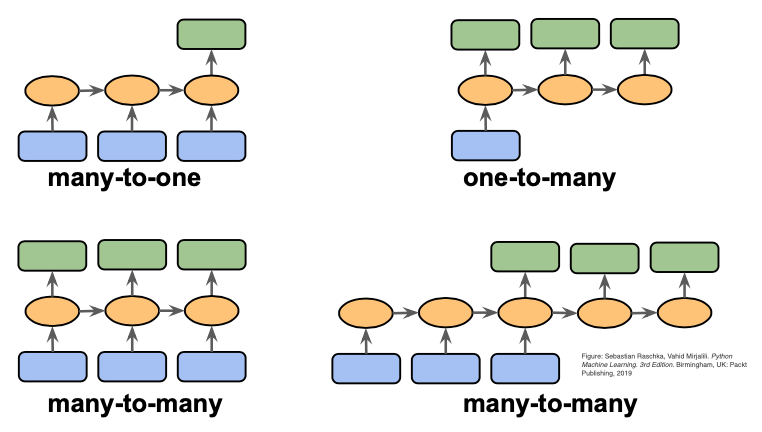

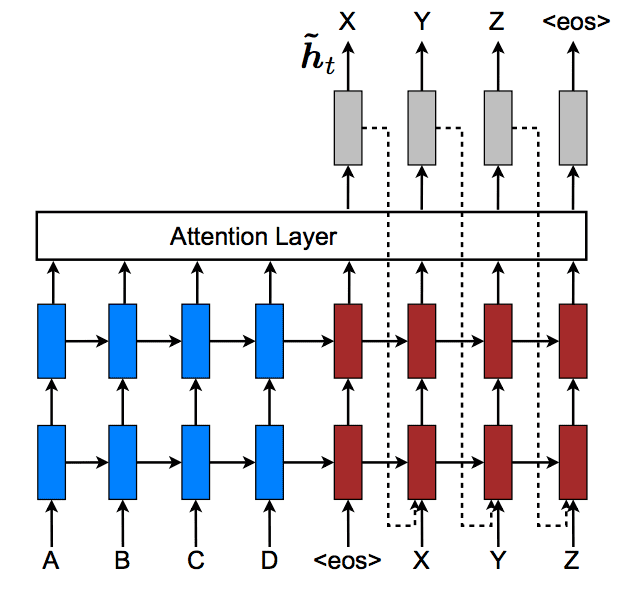

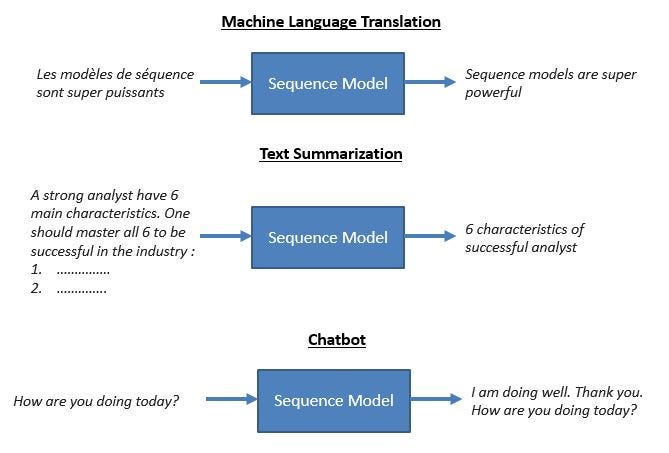

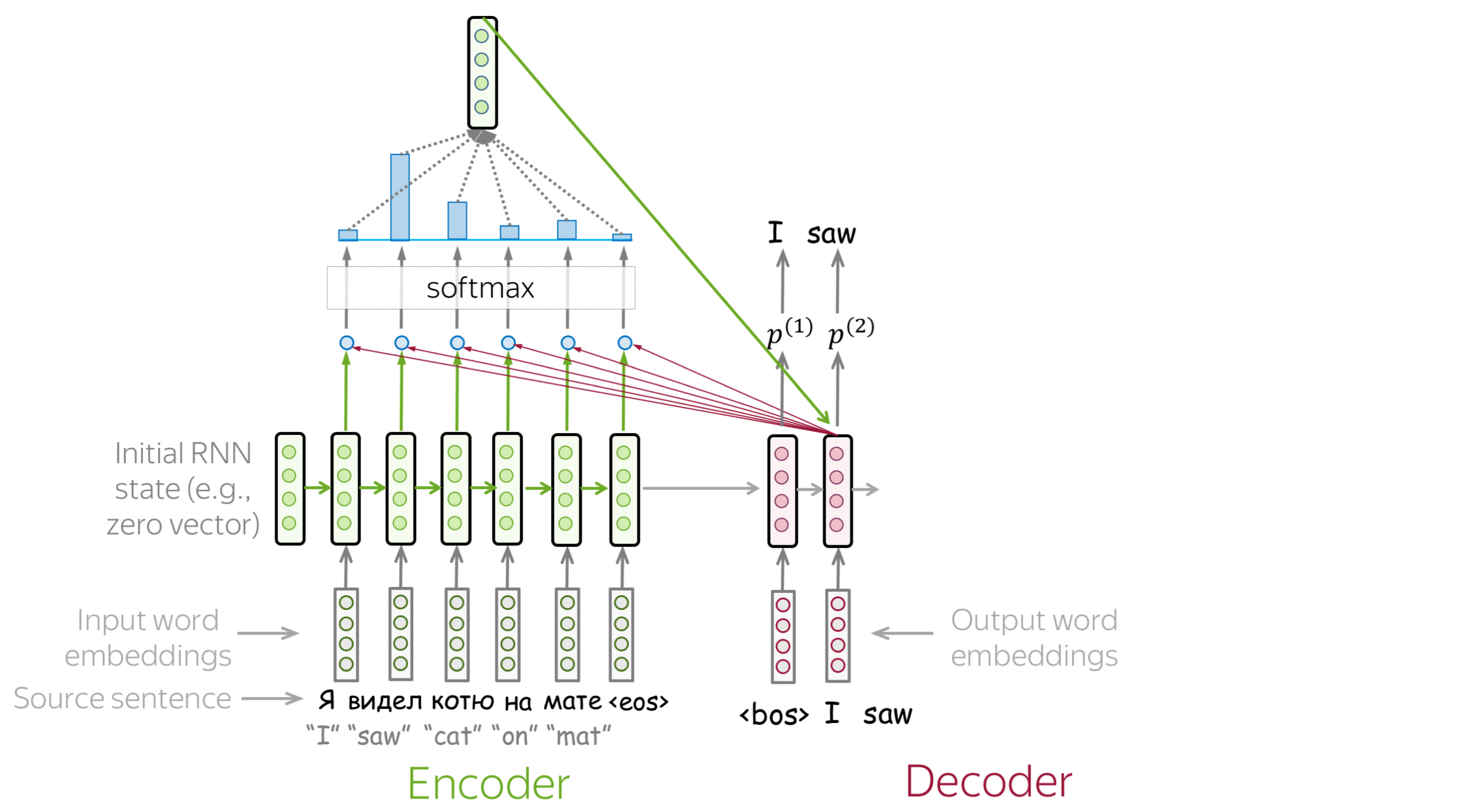

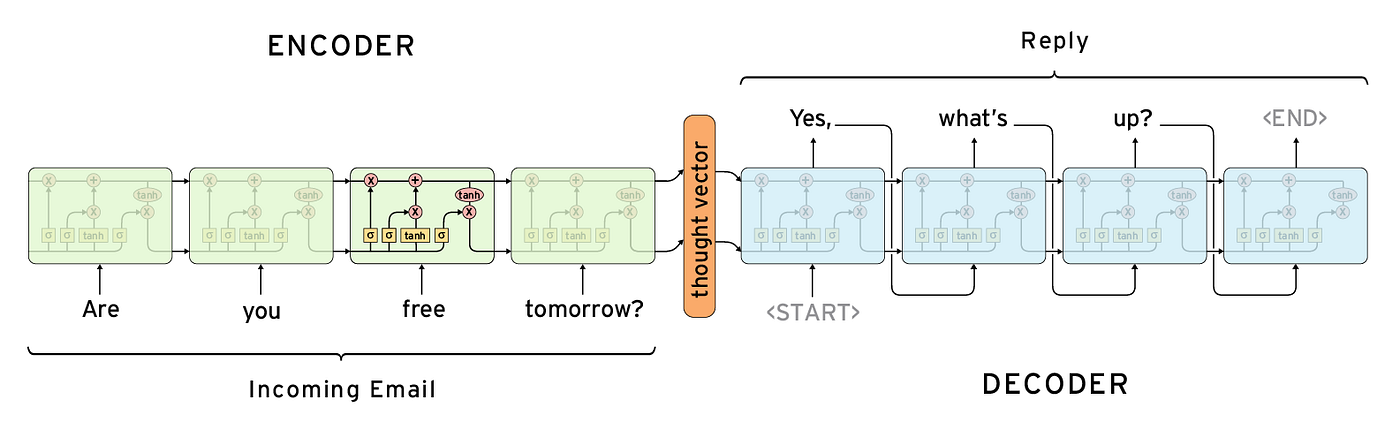

How Attention works in Deep Learning: understanding the attention mechanism in sequence models | AI Summer

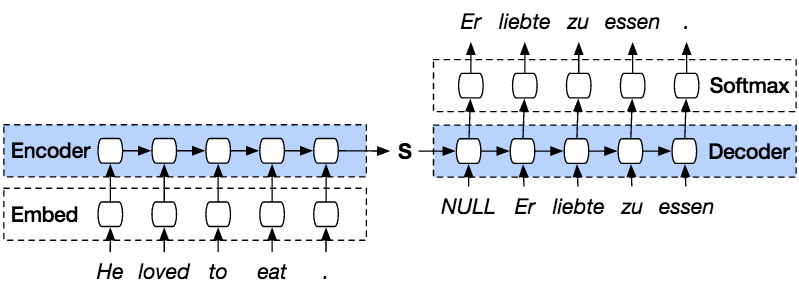

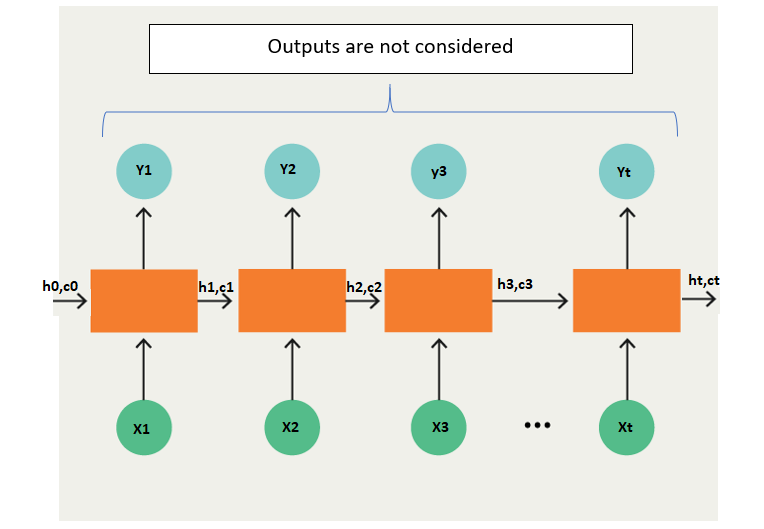

Introducing tf-seq2seq: An Open Source Sequence-to-Sequence Framework in TensorFlow – Google AI Blog

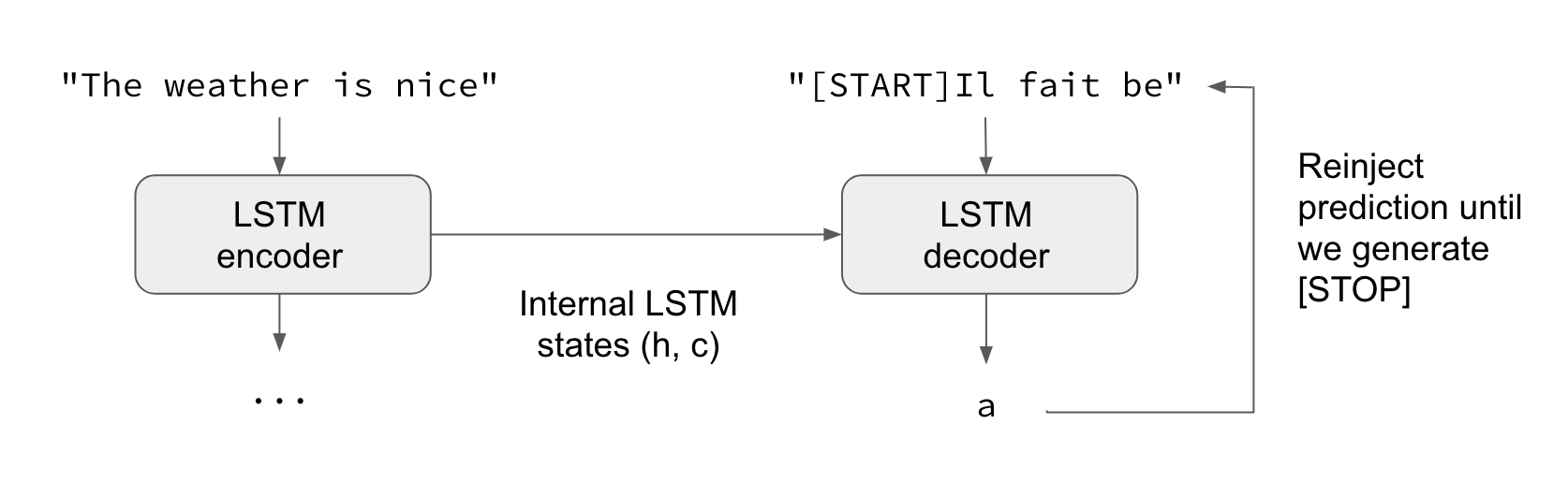

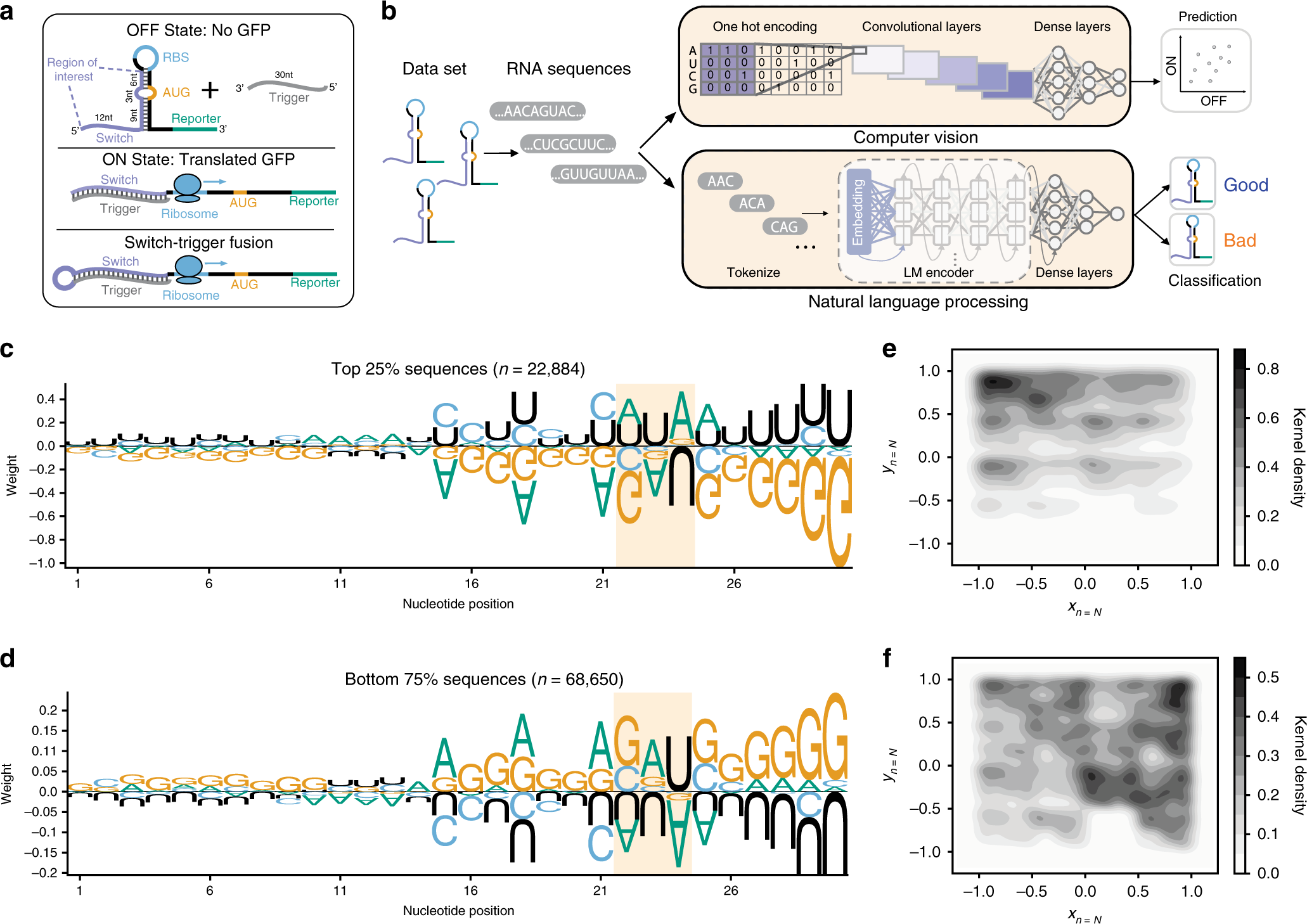

Attention — Seq2Seq Models. Sequence-to-sequence (abrv. Seq2Seq)… | by Pranay Dugar | Towards Data Science

NLP From Scratch: Translation with a Sequence to Sequence Network and Attention — PyTorch Tutorials 2.0.1+cu117 documentation